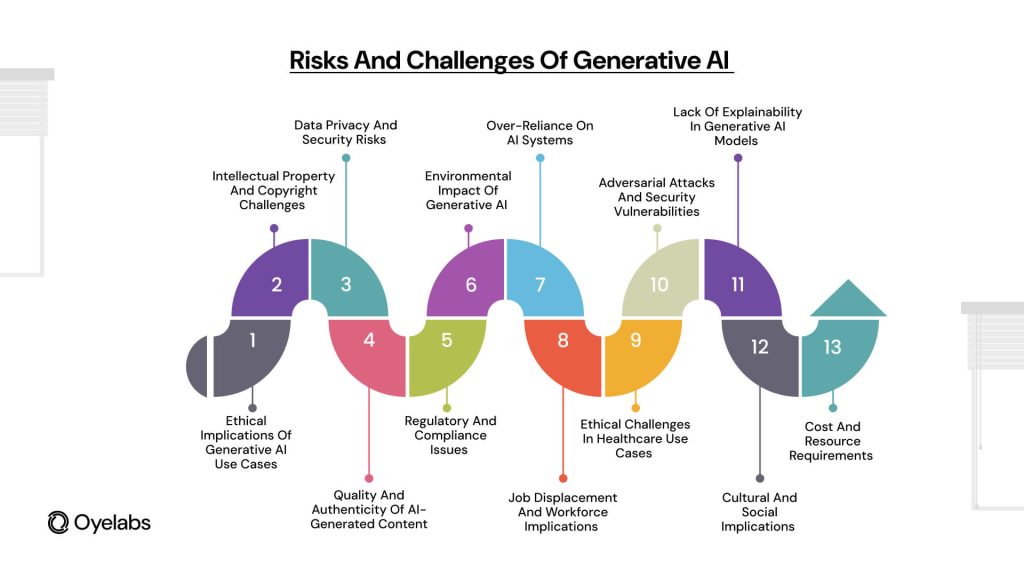

Preface

With the rapid advancement of generative AI models such as GPT-4, businesses are witnessing a transformation driven by unprecedented scalability in automation and content creation. However, these innovations also introduce complex ethical dilemmas, including data privacy issues, misinformation, bias, and accountability.

According to a 2023 MIT Technology Review study, a vast majority of AI-driven companies have expressed concerns about ethical risks. This finding underscores the urgency of addressing AI-related ethical concerns.

The Role of AI Ethics in Today’s World

AI ethics refers to the principles and frameworks governing how AI systems are designed and used responsibly. Without ethical safeguards, AI models may amplify discrimination, threaten privacy, and propagate falsehoods.

A recent Stanford AI ethics report found that some AI models exhibit racial and gender biases, which can lead to discriminatory law enforcement practices. Addressing these challenges is crucial for maintaining public trust in AI.

Bias in Generative AI Models

A significant challenge facing generative AI is bias. Because AI models learn from massive datasets, they often inherit and amplify the biases present in that data.

Recent research by the Alan Turing Institute revealed that AI-generated images often reinforce stereotypes, such as depicting men in leadership roles more frequently than women.

To mitigate these biases, developers need to implement bias detection mechanisms, apply fairness-aware algorithms, and establish AI accountability frameworks.
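One simple form of bias detection is to compare how often each group receives a positive outcome. The sketch below computes a demographic parity gap, which is only one of several possible fairness metrics. The function names and the sample data are hypothetical and are not taken from any study cited here.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive outcomes for each group.

    `records` is an iterable of (group, outcome) pairs, where outcome is 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records):
    """Return the difference between the highest and lowest selection rate."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit data: 1 means the model depicted the person as a leader.
sample = [
    ("men", 1), ("men", 1), ("men", 0), ("men", 1),
    ("women", 1), ("women", 0), ("women", 0), ("women", 0),
]

print(selection_rates(sample))
print(demographic_parity_gap(sample))
```

A gap near zero suggests the groups are treated similarly on this one measure. A large gap is a signal to investigate further, not proof of unfairness on its own.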

Deepfakes and Fake Content: A Growing Concern

AI technology has fueled the rise of deepfake misinformation, threatening the authenticity of digital content.

In a growing number of deepfake scandals, AI-generated media has been used to manipulate public opinion. According to a Pew Research Center survey, over half of the population fears AI’s role in misinformation.

To address this issue, businesses need to enforce content authentication measures, adopt watermarking systems, and collaborate with policymakers to curb misinformation.
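As a rough illustration of content authentication, a publisher could attach a keyed signature to each piece of content so that later tampering can be detected. The sketch below uses an HMAC from the Python standard library. This is a signature scheme rather than a watermark embedded in the media itself, and the function names and key are hypothetical.

```python
import hashlib
import hmac


def sign_content(content: bytes, key: bytes) -> str:
    """Return a hex-encoded HMAC-SHA256 tag for the content."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()


def verify_content(content: bytes, tag: str, key: bytes) -> bool:
    """Check that the tag matches the content, using a constant-time comparison."""
    expected = sign_content(content, key)
    return hmac.compare_digest(expected, tag)


# Hypothetical secret key; in practice it would come from secure storage.
secret_key = b"example-secret-key"
original = b"Official statement from the press office."
tag = sign_content(original, secret_key)

print(verify_content(original, tag, secret_key))
print(verify_content(b"Altered statement.", tag, secret_key))
```

Anyone holding the key can confirm that content is unchanged since it was signed. Verification by the general public would need a public-key signature instead, which this sketch does not cover.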

How AI Poses Risks to Data Privacy

Protecting user data is a critical challenge in AI development. Training data for AI may contain sensitive information, potentially exposing personal user details.

Recent EU findings indicate that many AI-driven businesses have weak compliance measures.

To protect user rights, companies should adhere to regulations like GDPR, ensure ethical data sourcing, and adopt privacy-preserving AI techniques.
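One basic privacy-preserving step is to redact obvious personal identifiers from text before it is used for training. The sketch below masks email addresses and phone numbers with regular expressions. The patterns are simplified assumptions that will miss many real-world formats, and redaction alone does not amount to GDPR compliance.

```python
import re

# Simplified, illustrative patterns; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


# Hypothetical training record containing personal details.
record = "Contact jane.doe@example.com or +1 555-123-4567."
print(redact_pii(record))
```

Stronger techniques, such as differential privacy or federated learning, address cases that pattern matching cannot catch.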

Final Thoughts

Balancing AI advancement with ethics is more important than ever. To foster fairness and accountability, companies should integrate AI ethics into their strategies.

As generative AI reshapes industries, ethical considerations must remain a priority. With responsible AI adoption strategies, AI can be harnessed as a force for good.
